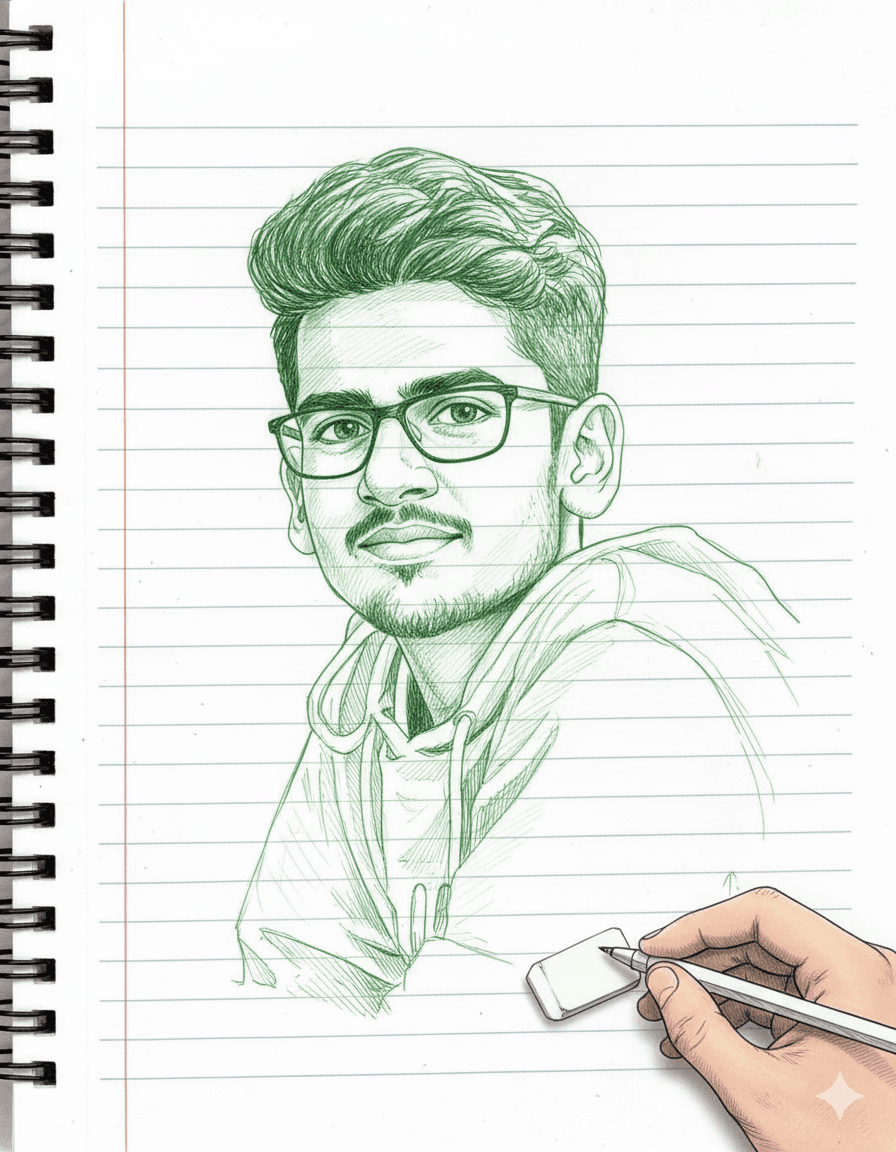

Ganesh Angadi - DevOps Engineer

About Ganesh Angadi

$ man ganesh

System Thinker

Hi, I'm GANESH ANGADI

DevOps Engineer since 2023, focused on Docker, Kubernetes, Linux fundamentals, and system architecture and design.

I don't just build features. I design systems. I think in control flow, model failure states, and design for observability.

📍 Mysuru, Karnataka, India

Achievements & Awards

$ history | grep "milestones"

Recognition & Experience

MCP-Based Systems Engineering Hackathon

Built an MCP wrapper around an ISL translator — enabling AI assistant integration. Focused on the integration layer, not reinventing the tool.

AI & Machine Learning Intern

Selected for an AI/ML internship. Working on real projects alongside experienced professionals. Offer ID: OL-2026-VP9K07.

DevOps Projects

$ ls -lh /opt/projects

Things I've Built

DevOps Skills & Technologies

$ skills --level=expert

Tools & Technologies

System Architecture & Engineering Principles

$ cat /etc/sysctl.conf

I don't just build features.

I design systems.

Six principles I actually follow.

Think in control flow

Model failure states

Design for observability

Prefer explicit over magical abstractions

Break systems to understand them

A deploy without a rollback is a gamble

Services

$ systemctl list-units --type=service

How I solve systems problems

Available for freelance.

Full Stack Development

Android App Development

DevOps & CI/CD

Infrastructure & Architecture

Contact Ganesh Angadi

$ ping -c 4 ganesh.online

Reach out

What I believe

Infrastructure is not magic — it is decisions with trade-offs. I make those decisions explicit.

I'm still learning. Still breaking things on purpose to understand them. Still questioning every abstraction I reach for.

If you're building something that needs to be reliable, observable, and honest about its failure modes — let's talk.

— Ganesh Angadi

DevOps Engineer · System Thinker · Mysuru, India